I'm working on a task of estimating sparse label proportions, where the target is a probability distribution $\textbf{q} \in \Delta^{K-1}$, with $\Delta^{K-1} := \{\textbf{p} \in \mathbb{R}^K \, | \, p_k \geq 0, \; p_1 + \dots + p_K = 1 \}$, and the support is relatively small: letting $\mathcal{Y} = \{ k \, | \, q_k > 0\}$, we have $|\mathcal{Y}| \ll K$.
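
To make the setup concrete, here is a minimal NumPy sketch of such a target. The helper names `in_simplex` and `support`, and the values `K = 1000` and a support of size 5, are illustrative assumptions of mine, not anything taken from the paper.

```python
import numpy as np

def in_simplex(p, tol=1e-9):
    """Check that p is non-negative and sums to 1 (up to tol)."""
    p = np.asarray(p, dtype=float)
    return bool(np.all(p >= -tol) and abs(p.sum() - 1.0) <= tol)

def support(q):
    """Indices k with q_k > 0, i.e. the set Y."""
    return np.flatnonzero(np.asarray(q) > 0)

# Hypothetical sizes: K labels, only 5 of which carry mass.
K = 1000
rng = np.random.default_rng(0)
idx = rng.choice(K, size=5, replace=False)

q = np.zeros(K)
q[idx] = rng.dirichlet(np.ones(5))  # random proportions on the support

print(in_simplex(q), len(support(q)), K)
```

Note that a vector such as `[1.5, -0.5]` sums to 1 but is rejected by `in_simplex`, which is why the non-negativity constraint matters.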

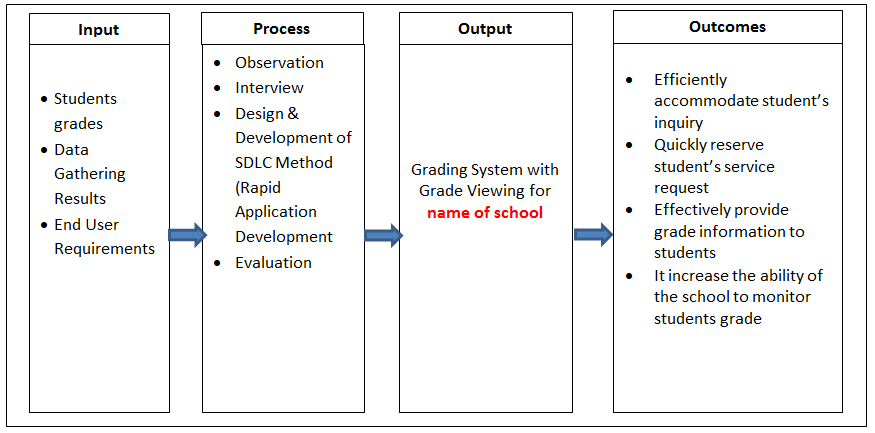

I was reading the following paper, where the authors suggest several activation functions with a controllable degree of sparsity, as well as novel loss functions to be used at training time. In particular, given

where $\textbf{z} \in \mathbb{R}^K$ is the logit vector and $\boldsymbol{\eta}$ denotes the true underlying probability distribution in $\Delta^{K-1}$, I'm wondering whether such a loss could be suitable for my problem (stated above).

My main concern is that the second term is a hinge loss, which is typical of a classification setting, while my task isn't really about classification.